Malicious COVID-19 content, including racist messages, disinformation and misinformation, thrives and spreads online by bypassing the moderation efforts of individual social media platforms, according to a new research study led by George Washington University Professor of Physics Neil Johnson and published in the journal Scientific Reports.

By mapping online hate clusters across six major social media platforms, Dr. Johnson and a team of researchers revealed how malicious content exploits pathways between platforms, highlighting the need for social media companies to rethink and adjust their content moderation policies.

The research team set out to understand how and why malicious content thrives so well online despite significant moderation efforts, and how it can be stopped. They used a combination of machine learning and network data science to investigate how online hate communities wielded COVID-19 as a weapon and used current events to draw in new followers.

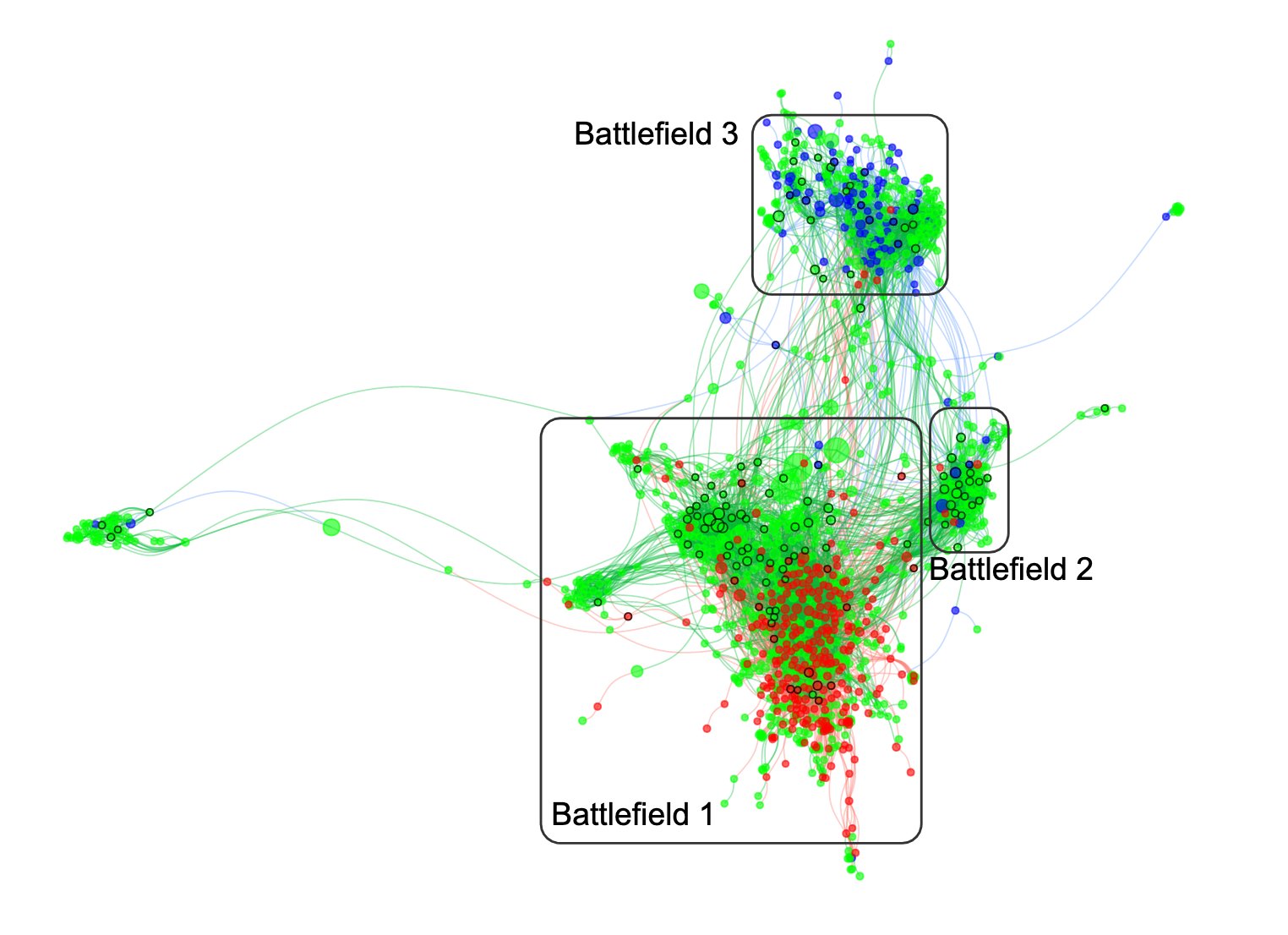

“Until now, slowing the spread of malicious content online has been like playing a game of whack-a-mole, because a map of the online hate multiverse did not exist,” said Dr. Johnson, who is also a researcher at the GW Institute for Data, Democracy & Politics. “You cannot win a battle if you don’t have a map of the battlefield. In our study, we laid out a first-of-its-kind map of this battlefield. Whether you’re looking at traditional hate topics, such as anti-Semitism or anti-Asian racism surrounding COVID-19, the battlefield map is the same. And it is this map of links within and between platforms that is the missing piece in understanding how we can slow or stop the spread of online hate content.”

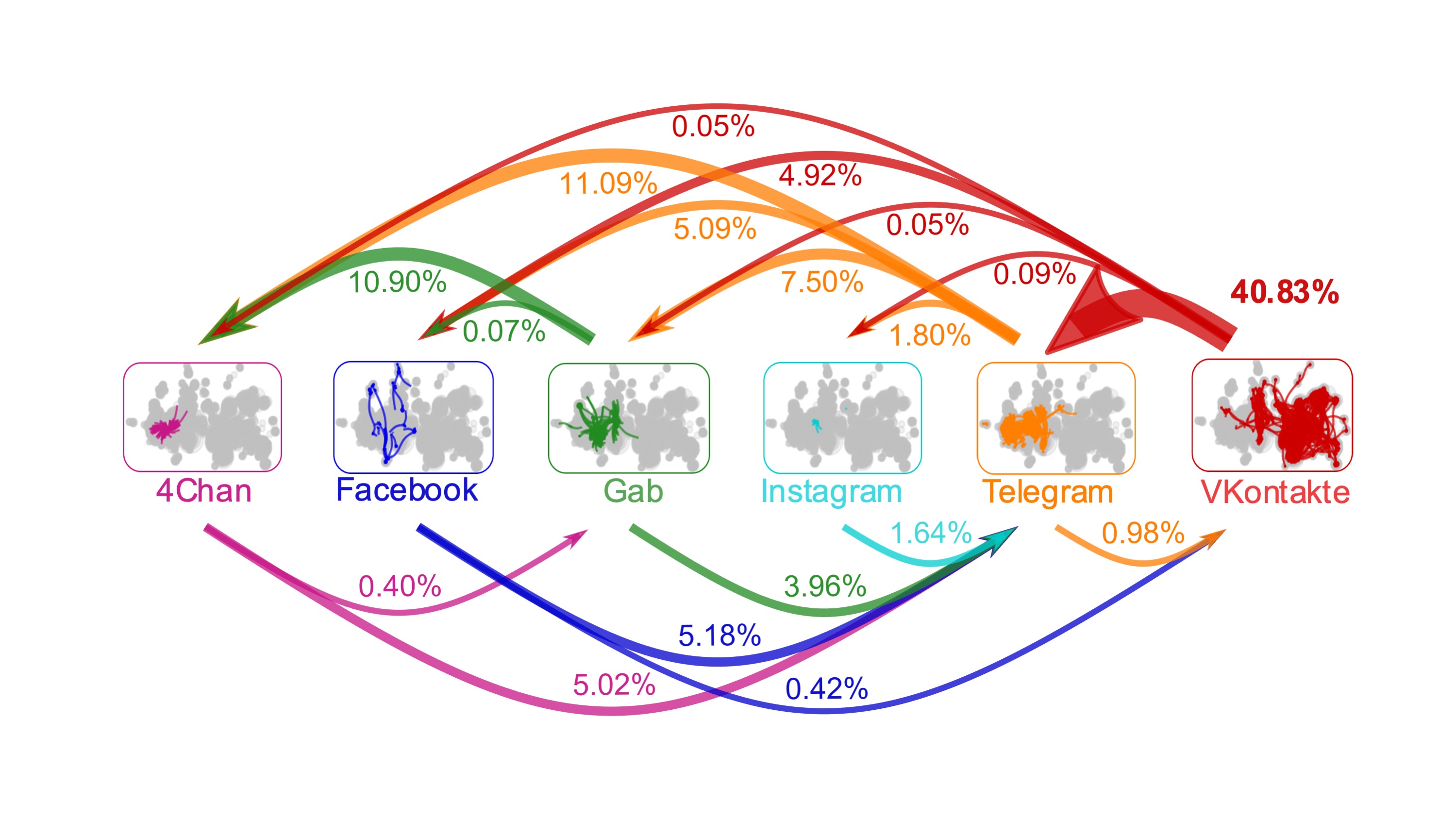

The researchers began by mapping how hate clusters interconnect to spread their narratives across social media platforms. Focusing on six platforms—Facebook, VKontakte, Instagram, Gab, Telegram and 4Chan—the team started with a given hate cluster and looked outward to find a second cluster that was strongly connected to the original. They found the strongest connections were VKontakte into Telegram (40.83 percent of cross-platform connections), Telegram into 4Chan (11.09 percent) and Gab into 4Chan (10.90 percent).
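As a rough illustration of how percentages like these can be computed, the sketch below tallies directed links between clusters by platform pair and reports each pair's share of all cross-platform links. The link list and the function name `cross_platform_shares` are hypothetical, chosen only for this example; they are not the study's data or method.

```python
from collections import Counter

# Hypothetical directed links between clusters: (source_platform, target_platform).
# These toy rows are illustrative only and are NOT the study's data.
links = [
    ("VKontakte", "Telegram"),
    ("VKontakte", "Telegram"),
    ("Telegram", "4Chan"),
    ("Gab", "4Chan"),
    ("Facebook", "Facebook"),  # within-platform link, excluded below
]


def cross_platform_shares(links):
    """Return {(source, target): percent of all cross-platform links}."""
    # Keep only links whose source and target platforms differ.
    cross = [(src, dst) for src, dst in links if src != dst]
    total = len(cross)
    if total == 0:
        return {}
    counts = Counter(cross)
    return {pair: 100.0 * n / total for pair, n in counts.items()}


shares = cross_platform_shares(links)
for (src, dst), pct in sorted(shares.items(), key=lambda kv: -kv[1]):
    print(f"{src} -> {dst}: {pct:.2f}%")
```

Because within-platform links are dropped before counting, the shares sum to 100 percent of cross-platform links only, which matches how the figures above are phrased.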

The researchers then turned their attention to identifying malicious content related to COVID-19. They found that the coherence of COVID-19 discussion increased rapidly in the early phases of the pandemic, with hate clusters forming narratives and cohering around COVID-19 topics and misinformation.

To subvert moderation efforts by social media platforms, groups spreading hate messages used several adaptation strategies to regroup on other platforms or to reenter a platform after being banned, the researchers found. For example, clusters frequently changed their names to avoid detection by moderators’ algorithms, for instance by typing “vaccine” as “va$$ine.” Similarly, anti-Semitic and anti-LGBTQ clusters simply added strings of 1’s or A’s before their names.
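To make the evasion tactics concrete, here is a minimal sketch of how a moderation filter might counter them, assuming a small watchlist of terms. It uses approximate string matching from Python's standard `difflib` module to catch spellings such as “va$$ine,” and a regular expression to strip leading runs of 1’s or A’s. The watchlist, the cutoff value, and the helper names are assumptions made for this example and do not describe any platform's actual system.

```python
import difflib
import re

# Hypothetical watchlist; a real system would use a much larger, curated list.
WATCHLIST = ["vaccine"]


def strip_padding(name):
    """Remove a leading run of three or more 1's or A's plus any separator.

    The three-character minimum is a crude guard (an assumption) so that
    ordinary names starting with a single 'A' are left untouched.
    """
    return re.sub(r"^(?:1{3,}|[Aa]{3,})[\s_\-]*", "", name)


def fuzzy_hits(text, watchlist=WATCHLIST, cutoff=0.65):
    """Return watchlist terms that approximately match any token in text."""
    hits = set()
    for token in text.lower().split():
        # get_close_matches keeps candidates whose similarity ratio >= cutoff.
        hits.update(difflib.get_close_matches(token, watchlist, n=1, cutoff=cutoff))
    return sorted(hits)


print(strip_padding("1111 Example Group"))
print(fuzzy_hits("get the va$$ine now"))
```

A similarity cutoff trades missed variants against false alarms, so any real deployment would need to tune it against labeled examples.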

“Because the number of independent social media platforms is growing, these hate-generating clusters are very likely to strengthen and expand their interconnections via new links and will likely exploit new platforms that lie beyond the reach of the U.S. and other Western nations’ jurisdictions,” Dr. Johnson said. “The chances of getting all social media platforms globally to work together to solve this are very slim. However, our mathematical analysis identifies strategies that platforms can use as a group to effectively slow or block online hate content.”

Based on their findings, the team, which included researchers at Google, suggested several ways for social media platforms to slow the spread of malicious content:

- Artificially lengthen the pathways that malicious content must take between clusters, increasing the chances of its detection by moderators and delaying the spread of time-sensitive material such as weaponized COVID-19 misinformation and violent content.

- Control the size of an online hate cluster’s support base by placing a cap on the size of clusters.

- Introduce non-malicious, mainstream content in order to effectively dilute a cluster’s focus.

“Our study demonstrates a similarity between the spread of online hate and the spread of a virus,” said Yonatan Lupu, an associate professor of political science at GW and co-author on the study. “Individual social media platforms have had difficulty controlling the spread of online hate, which mirrors the difficulty individual countries around the world have had in stopping the spread of the COVID-19 virus.”

Going forward, the research team is already using its map and mathematical modeling to analyze other forms of malicious content, including the weaponization of COVID-19 vaccine misinformation. The team is also examining the extent to which single actors, including foreign governments, may play a more influential or controlling role in this space than others.